As All Day DevOps 2018 kicked off, one of the day’s first sessions was by Dr. Martino Fornasa, DevOps Lead at Kiratech, who explained how to build a local development environment with Kubernetes-based containers.

Let’s dive into the key points that he made.

Many developers are already deploying software in containers. But what about local development? Fornasa has tried to get as close as possible to production when developing locally.

Having a good local development workflow is about:

- Easier onboarding for new developers

- Consistent software dependency management

- Achieving production parity as much as possible

- Running tests locally (faster than having to wait for the CI server)

- Building knowledge

But creating a local development environment is hard. Each developer has a different way of working and each technology stack has its own specifics.

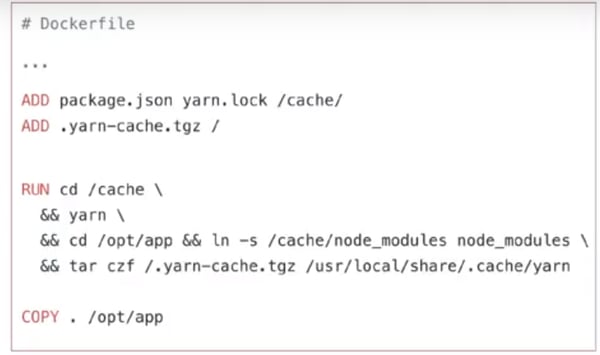

For example, when using Docker and Node, you might want to cache the node modules so that they are not reinstalled on every image build.
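The usual Docker technique is to copy the dependency manifests and install the modules in an earlier image layer than the application source, so that layer is reused until the manifests change. The Python sketch below only illustrates that idea and is not how Docker implements it: the cache key is derived from the manifests alone, so editing source files never invalidates it. The file names in `MANIFESTS` and both function names are assumptions made for this example.

```python
import hashlib
from pathlib import Path
from typing import Optional

# Assumed manifest names; adjust to whatever your project actually uses.
MANIFESTS = ("package.json", "package-lock.json")


def dependency_key(project_dir: str) -> str:
    """Hash only the dependency manifests, ignoring all other source files."""
    digest = hashlib.sha256()
    for name in MANIFESTS:
        path = Path(project_dir) / name
        digest.update(name.encode())
        if path.exists():
            digest.update(path.read_bytes())
    return digest.hexdigest()


def needs_install(project_dir: str, cached_key: Optional[str]) -> bool:
    """Reinstall modules only when the manifests differ from the cached key."""
    return dependency_key(project_dir) != cached_key
```

A build script could store the key next to the cached modules and skip the install step whenever `needs_install` returns `False`.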

So as you can imagine, working with containers requires some language- and technology-specific knowledge.

A few years ago, Fornasa rolled his own solution, as there wasn’t much around. He used Docker volume binding to sync his local code directory with the application directory on the container. This worked well and was fast. Fornasa asked the other developers to use the same mechanism.

What could possibly go wrong? Well, a lot. There were speed issues and sync issues, and a lot of garbage was left on the local machine afterwards. Also, if developers didn’t or couldn’t give enough resources to the containers, this didn’t work efficiently. So people hated it.

To solve this, Fornasa came up with some implementation goals:

- Carefully tune for performance and reduce friction

- Make it easy for people to do the right thing

- Allow them to use their own tools

When Fornasa built his own tools, he used only Docker. But now you can run Kubernetes locally, which takes production parity to the next level. We live in exciting times, and the tooling is constantly improving.

But before you begin with local Kubernetes, you should ask yourself if you really want to. Some smaller applications might not reap a lot of benefit. But when your application becomes more complex, containers and Kubernetes will make it easier to set up complex environments (databases, caches, search engines, etc.).

Another benefit is that you can have the same tools for development and deployment. It also enables microservices and allows each developer to run the full system (possibly with external dependencies). Finally, the CI server can build the final image and enforce policies.

Fornasa then went on to explain three approaches.

Auto rebuild and deploy

The first is an approach that can be run on a remote cluster or locally. On each code change, this approach rebuilds the image and deploys it. Fornasa calls this “auto rebuild and deploy.” Skaffold and Draft are tools that come to mind here.

This approach has a higher guarantee of correctness. On the other hand, it can have speed issues because you’re rebuilding the entire image.
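The loop behind this approach can be sketched in a few lines of Python. This is a minimal polling sketch under stated assumptions, not how Skaffold or Draft are implemented: `snapshot`, `changed_paths`, and `watch` are names invented for this example, and the commands to run are supplied by the caller.

```python
import os
import subprocess
import time
from typing import Dict, List


def snapshot(root: str) -> Dict[str, float]:
    """Map every file under root to its last-modified time."""
    state = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                state[path] = os.stat(path).st_mtime
            except OSError:
                continue  # file vanished between listing and stat
    return state


def changed_paths(before: Dict[str, float], after: Dict[str, float]) -> List[str]:
    """Return paths that were added, removed, or modified between snapshots."""
    paths = before.keys() | after.keys()
    return sorted(p for p in paths if before.get(p) != after.get(p))


def watch(root: str, commands: List[List[str]], interval: float = 1.0) -> None:
    """Poll root forever and run every command after each detected change."""
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        if changed_paths(previous, current):
            for command in commands:
                subprocess.run(command, check=True)
        previous = current
```

A caller might pass something like `[["docker", "build", "-t", "my-app:dev", "."], ["kubectl", "apply", "-f", "k8s/"]]`, where the image name and manifest directory are hypothetical. Because every change triggers a full image build, the speed cost mentioned above is visible directly in the loop.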

Draft supports several languages like .NET, Go, Node, PHP, Java, Python, and Ruby. It uses Helm under the hood and can generate your Dockerfile and Helm Chart. According to Fornasa, Draft still lacks a system for full automation, like monitoring file changes.

Skaffold, on the other hand, can detect changes and then run a build and deploy, so the loop is essentially automatic. Although it offers less automatic initialization than Draft, it has great documentation, according to Fornasa. Skaffold also supports the second approach (see below), where it synchronizes the files to the cluster.

Run on cluster + synchronize filesystem

The second approach runs on the cluster and synchronizes the filesystem. This generally works faster, especially for interpreted languages where a complete rebuild is not always necessary.

A downside is that you have a higher chance of drifting from the image built by the CI.
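The synchronization idea can be sketched as follows. This is an illustration only, assuming a local directory stands in for the container's filesystem; real tools send the files to a running container over the network. The function name `sync_tree` is invented for this example, and the sketch does not delete files that were removed from the source.

```python
import filecmp
import os
import shutil
from typing import List


def sync_tree(source: str, target: str) -> List[str]:
    """Copy new or modified files from source to target.

    Returns the relative paths that were copied, sorted.
    """
    copied = []
    for dirpath, _dirnames, filenames in os.walk(source):
        for name in filenames:
            src = os.path.join(dirpath, name)
            rel = os.path.relpath(src, source)
            dst = os.path.join(target, rel)
            # Skip files whose content is already identical on the target.
            if os.path.exists(dst) and filecmp.cmp(src, dst, shallow=False):
                continue
            os.makedirs(os.path.dirname(dst), exist_ok=True)
            shutil.copy2(src, dst)
            copied.append(rel)
    return sorted(copied)
```

Only the changed files move, which is why this approach tends to be faster than rebuilding the image. It also shows where the drift comes from: the target ends up holding files that never went through the CI build.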

As mentioned, Skaffold can take this approach. Ksync is another tool Fornasa mentioned.

Ksync runs a local service (or daemon) that interacts with the Docker daemon. It syncs files with the container. In the future, Ksync will also be able to execute remote commands. This could be useful if you need to recompile on the container.

Run locally + bridge network

In this third approach, you run your code locally and develop against remote resources. You then bridge the network so that it seems like your code runs in the cluster.

Telepresence is a tool that allows you to run your processes or services locally but still access services in a cloud-based Kubernetes cluster, just as if they were running in your local cluster.

With these three interesting approaches, Fornasa concluded his talk on different strategies and tools that can help you build a local development environment when you’re working with containers and Kubernetes.

***

About the author, Peter Morlion:

Peter is an experienced software developer, across a range of different languages, specializing in getting legacy code back up to modern standards. Based in Belgium, he’s fluent with TDD, CQRS and other modern software development standards. Connect with Peter at @petermorlion.
